This personal reflection was written by Yuxi Liang, a volunteer note-taker at the Knowledge4Change Tkaronto Hub’s Innovation Lab, “Centering Community Participatory Knowledge in the Age of AI,” held on January 30-31, 2026, at the Regent Park Community Centre. To learn more about the Innovation Lab, check out our event recap here!

Before participating in this two-day AI Innovation Lab, I had only a preliminary idea of what the event would be. I knew it was themed around artificial intelligence and might discuss the development, impact, or risks of AI, but I didn’t have a clear understanding of its specific format and content. During the two sessions I attended on the second day of the Innovation Lab, I gradually realized that the discussions were not approached purely from a technical perspective. Instead, through design, discussion, and creation, AI was placed in a more specific community context for reflection.

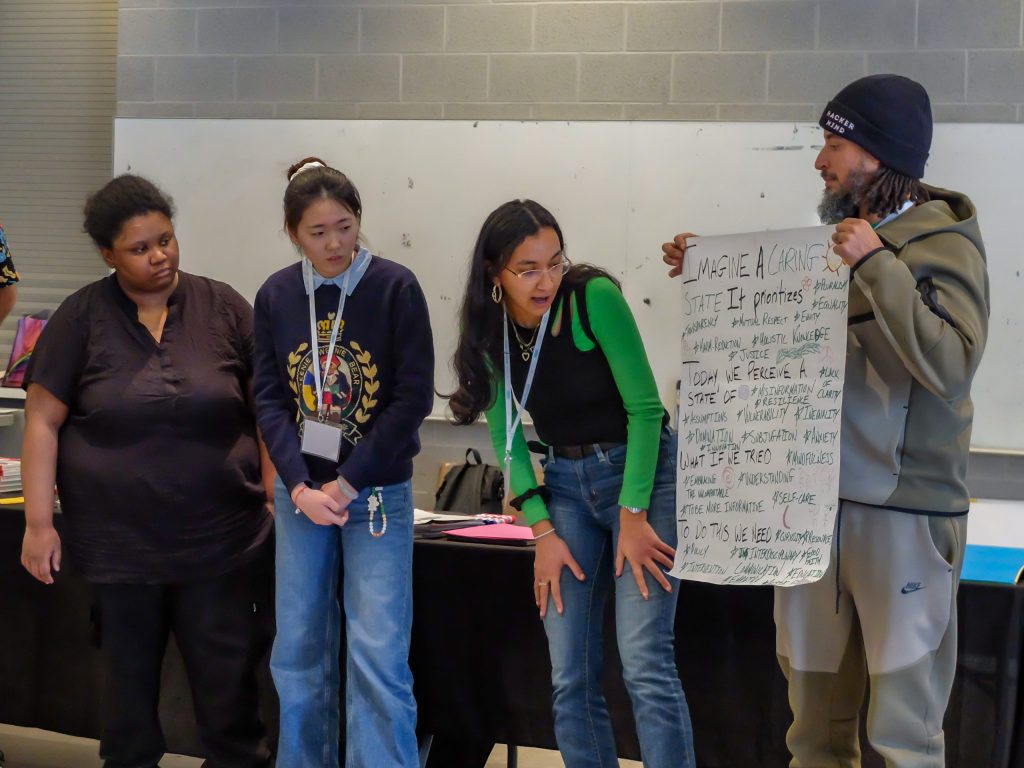

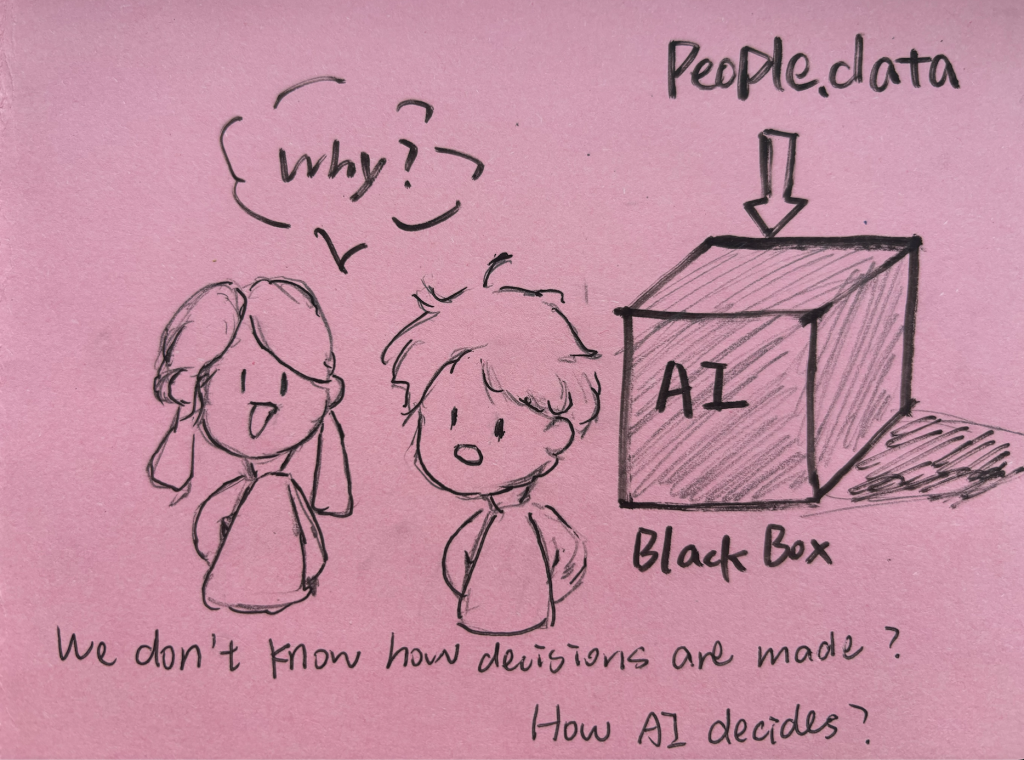

On the second day, one of the sessions was led by Alex Ryan, CEO of Synthetikos Inc., and was conducted as a design sprint. Alex guided us to imagine what an ideal community would look like, what the current community is like, and what we could do to turn the community into the one we envision. We were divided into groups and sat around large poster paper, jotting down these ideas one by one. However, the process did not revolve around specific applications of AI, as I had initially expected. Instead, it returned to some more fundamental questions: what kind of community do we want to live in, and what concerns and expectations do we have about the existing situation? During the group discussions, we did not attempt to systematically analyze the social structure or propose complete solutions. Instead, we more often exchanged our feelings and intuitions. A recurring feeling in the discussions was that we were not clear about “how things are decided.” As a student, I found that this feeling resonated strongly with me, because I am often affected by AI-related decisions, yet I am rarely told how those decisions are made or whether I can question them. Although this feeling, which was shared by the group and which I experienced personally, was not immediately summarized into a specific concept, it persisted throughout the discussions and influenced our understanding of the relationship between community and AI.

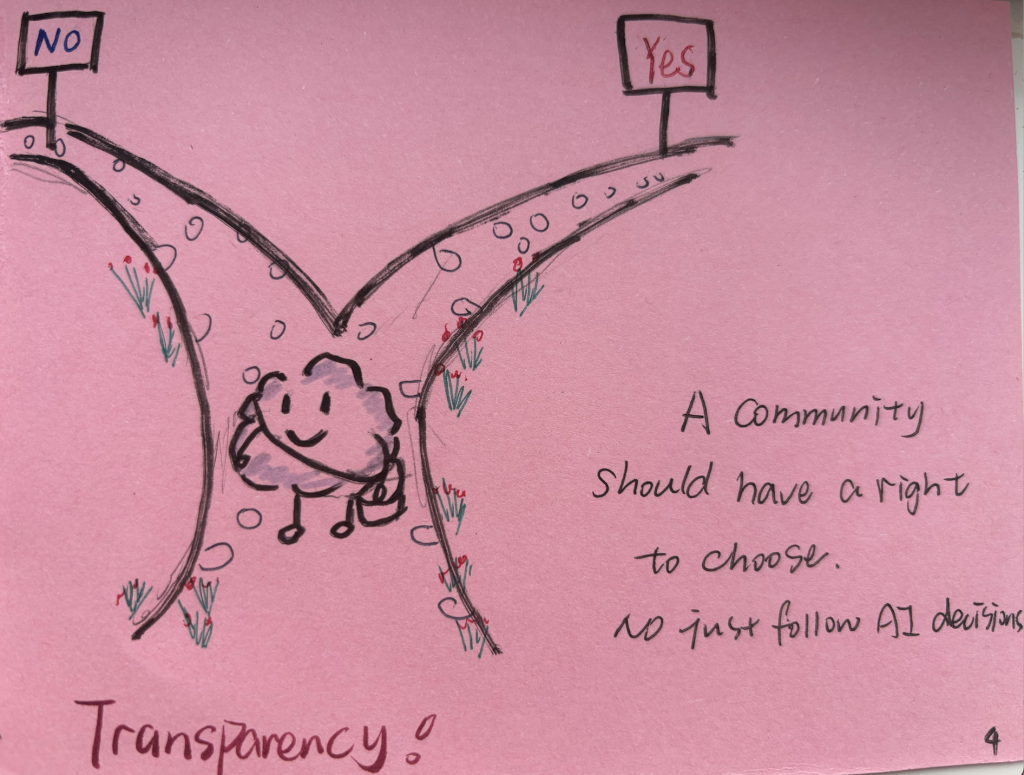

After each group discussion concluded, Alex gathered everyone together for a sharing circle. Different participants began to share their own understanding of their ideal community. Some mentioned the need to preserve AI-free spaces, while others talked about restricting the use of AI to certain scenarios. As I listened to what others shared, the word “transparency” became particularly important to me. It was not presented as a unified conclusion but was more like a keyword that responded to my inner unease: a sense that the decision-making process was opaque and that opportunities for participation were uncertain. These reflections made me realize that transparency is not just about whether information is made public, but about whether the community has the opportunity to understand, discuss, and intervene in decisions related to AI.

After the design sprint session ended, the activity continued under Karen Natalia Villanueva’s guidance. She continued the discussion about community that had been started earlier, leading us to a deeper understanding of the concept of community-based research. For me, this was not a concept that I could fully understand at that moment, but I gradually realized that what it emphasized was not “how we study the community,” but whether the community could participate in raising, discussing, and expressing the problem. As discussions continued, I began to notice that this approach does not place the community in the position of a passive recipient of help, but instead emphasizes collective discussion and shared decision-making. Based on this understanding, the activity moved on to zine production, converting the previous discussions into a more open form of expression.

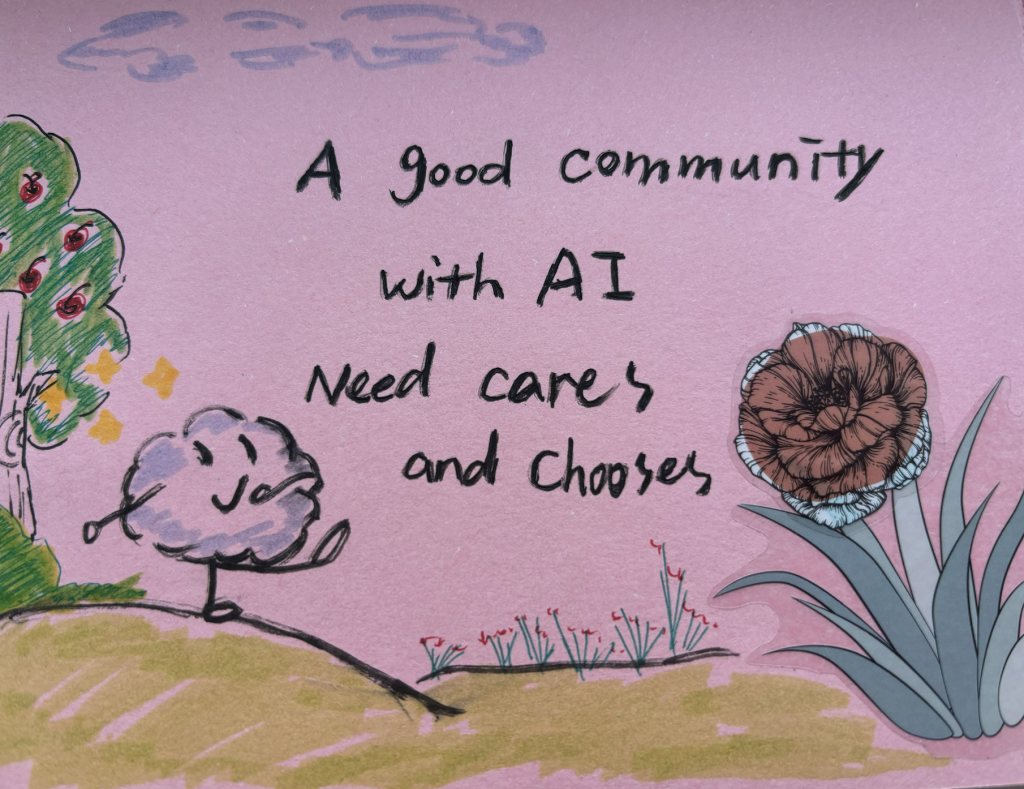

While creating the zine, I began to truly pause and reflect on how I would express these ideas that were not yet fully formed. I named my zine “A Community That Knows How to Coexist with AI.” In formulating this theme, I came to realize that what I cared about was not a “most advanced” or “most efficient” AI future, but whether the community still had the ability to judge and choose.

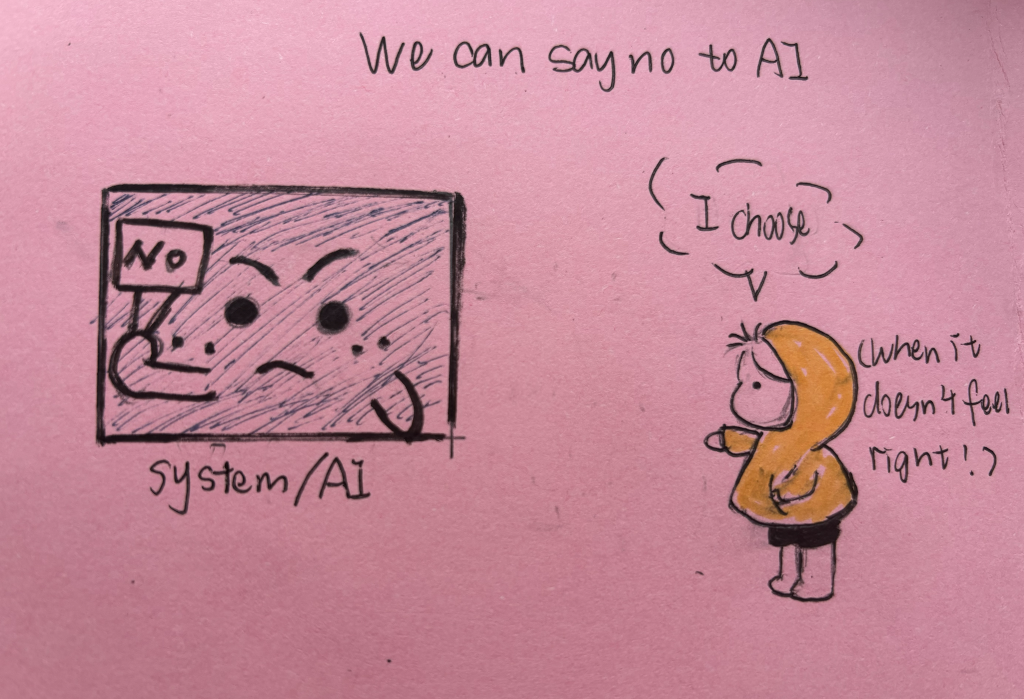

This awareness gradually emerged as I wrote down my thoughts. I noticed that I kept hesitating over some words. At one point I wrote down “efficiency,” then crossed it out and switched to words like “choice.” I later realized that this wasn’t a simple matter of wording, but rather a shift in what I focused on when thinking about how AI is used. Rather than prioritizing how quickly things are completed, what matters more to me is whether the community still has space to pause, to discuss, and even to reject certain technological interventions.

This shift felt especially significant to me as a student, as efficiency is often emphasized in learning environments, sometimes at the expense of reflection, discussion, and the ability to slow down. For me, the right to choose means preserving a process of judgment, which the word “efficiency” cannot truly convey.

As these ideas gradually became clear, I began to write down some assumptions about the boundaries of AI usage. For instance, AI should not be used to make judgments on people’s moral or cultural values. Decisions generated by AI should be given time and not be implemented immediately. They should be discussed by community members before a decision is made on whether to adopt them. It is also very important that the process of using AI is recorded, so that those responsible for it can be held accountable. These ideas are not a complete plan, but more like some intuitions and trials in the process of learning to think. If a choice really needs to be made, I would prefer to slow down the decision-making process rather than push it forward for the sake of efficiency.

Looking back at these two sessions, I did not form a complete and definite judgment regarding AI. However, through the discussions during the design sprint, an initial understanding of community-based research, and the creative process of zine-making, I gradually realized that when I was thinking about AI, what truly mattered to me was not the speed of technological development, but whether the community still had the space for understanding, discussion, and choice in this environment. Rather than providing clear answers, the lab left me with questions that continue to unfold. I began to pay more attention to which judgments need to be accelerated and which processes need to be slowed down, or even temporarily put on hold, when facing AI. For me, this ongoing reflection has not yet been completed, but it has become the most significant outcome of this experience and will continue to shape how I think about and engage with artificial intelligence in the future.

Yuxi Liang is an undergraduate student at the University of Toronto Scarborough studying International Development. She is interested in global inequality, development narratives, and the social impacts of emerging technologies such as artificial intelligence. Her academic interests focus on how knowledge, technology, and institutions shape the ways people understand development. Through her studies and community engagement, she enjoys reflecting on how these ideas relate to real-world experiences and conversations about development and social change.